Cloud Integration Testing Made Easy: LocalStack + Testcontainers

Introduction

LocalStack offers a compelling solution for streamlining the development and testing of your AWS-based applications. In a previous post, we explored the seamless transition between AWS and LocalStack, to ensure our application behaves the same across all stages of its lifecycle. Now, let's delve into the crucial aspect of testing and understand how LocalStack empowers you to conduct thorough and reliable tests, ensuring the robustness of your AWS applications.

The State of Integration Testing with the Cloud

The primary goal of integration tests is to uncover issues that may arise when different components interact with each other, such as incorrect data transfer, incompatible interfaces, communication failures, or synchronization problems.

Cloud integration tests are particularly challenging due to the high complexity of the interconnected services, resource constraints, dependency management, deployment and provisioning intricacies, and potentially high costs. Yet by simulating real-world scenarios and testing the integration of various parts of the system, these tests help us identify and resolve issues early in the development process. So how can we bring the ease and speed of unit tests into these integration tests? Ideally, we'd like to use a local setup where we can quickly spin up and deploy our services in an emulated environment that's as close as possible to the real deal. This is where Testcontainers and LocalStack work beautifully together to bring you the best of integration tests and cloud services on your machine.

Testcontainers

If you're in the Java ecosystem, you're probably well aware of JUnit, and if you're here, I'm sure you've at least heard of Testcontainers before.

Testcontainers is a popular Java library that provides lightweight, disposable containers for running integration tests. It allows developers to easily create and manage isolated environments for testing applications that require dependencies like databases, message brokers, web services, or other external systems. And here is where the beauty of having LocalStack ship as a Docker image comes into play: it is now an official Testcontainers module, meaning you can easily write integration tests for your AWS-powered application. With every test run, you can invoke a disposable, drop-in replacement for AWS without needing to mock out the services or connect to a remote cloud. This greatly enhances the effectiveness of your AWS application's integration tests.

The application to test

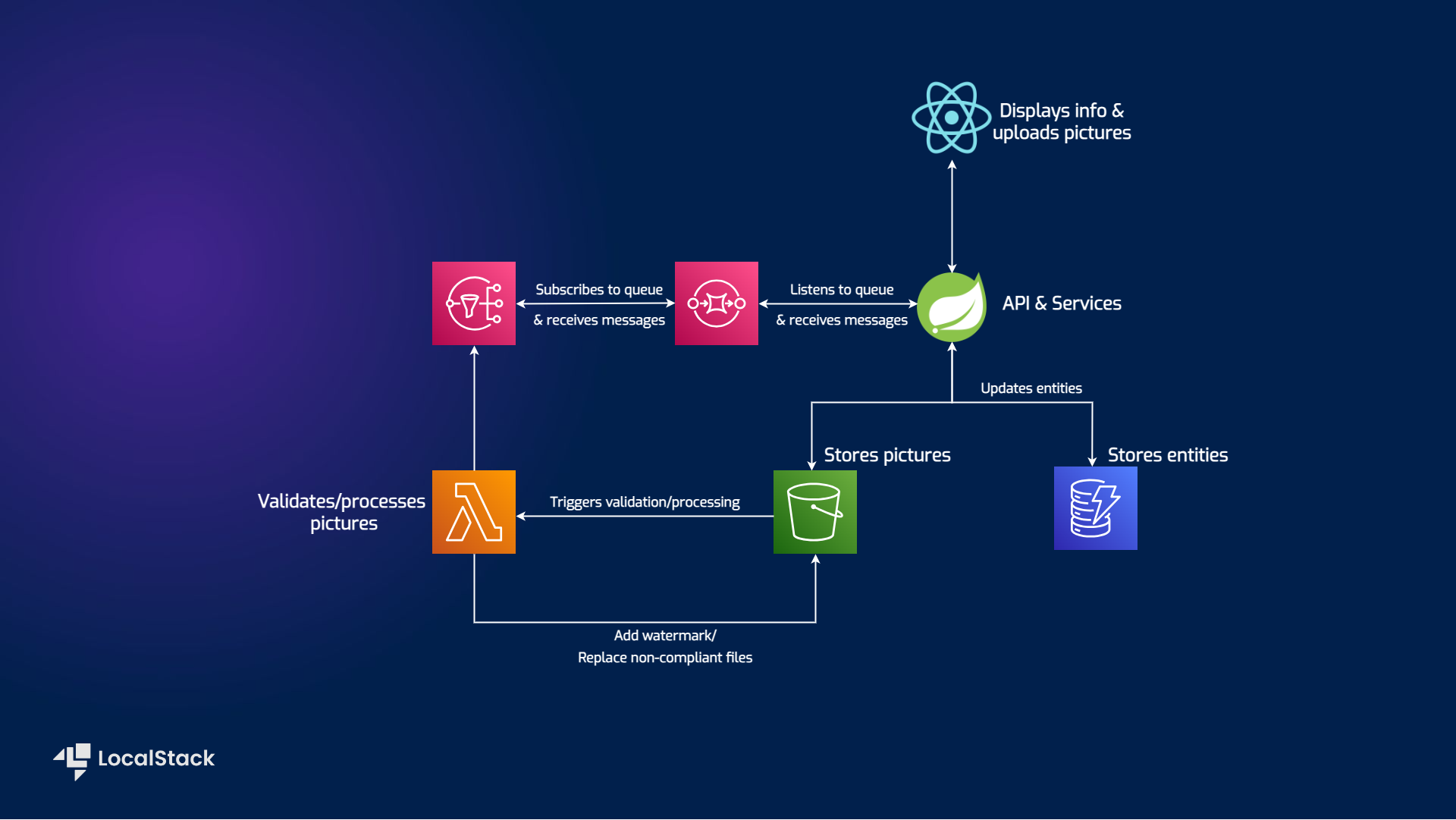

Let's have a quick look at the application's architecture and figure out what workflows we need to test. If you're looking for instructions on how to run the app, you'll find them here.

The application we want to test is a Spring Boot application dealing with CRUD operations executed on shipment entities - think of it like the Post app. The data is displayed with the help of a React frontend app. The user can only add and update shipments via direct REST calls using an API platform, such as Postman. By using the web app, one can delete shipments and add pictures corresponding to different entities. The pictures are validated to ensure they have a suitable format, and then a watermark is applied.

The AWS services involved are:

S3 for storing pictures.

DynamoDB for storing the entities.

Lambda function that will validate the pictures, apply a watermark and replace non-compliant files.

SNS that receives update notifications.

SQS, which subscribes to a topic and delivers the messages to the Spring Boot app.

Scenarios to be tested

By observing the diagram and the API, we find some workflows that need to be tested:

Upload a picture corresponding to a shipment and store it in the S3 bucket;

Download the picture from the S3 bucket;

Validate the existence of the entity before uploading a picture;

Add an entity to DynamoDB;

Get the entities from DynamoDB;

Delete an entity from DynamoDB;

Lambda function gets triggered by object uploads to the S3 bucket;

Lambda function adds metadata to processed objects;

Notification message passes through SNS and SQS to announce processing is finished.

Getting started

You can fork the repository yourself and follow along.

Prerequisites

Docker - for running LocalStack

Configuration

The most basic configuration your project needs to start using Testcontainers is the Maven dependency itself:

<dependency>

<groupId>org.testcontainers</groupId>

<artifactId>testcontainers</artifactId>

<version>1.18.3</version>

</dependency>

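Testcontainers provides modules that simplify testing by integrating with test frameworks and offering optimized service containers. Each module is a separate dependency that can be added as needed, so we'll add the JUnit Jupiter integration and the LocalStack container. No version is declared on these two dependencies, which assumes the version is managed elsewhere in the build, for example by importing the Testcontainers BOM in dependencyManagement.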

<dependency>

<groupId>org.testcontainers</groupId>

<artifactId>junit-jupiter</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.testcontainers</groupId>

<artifactId>localstack</artifactId>

<scope>test</scope>

</dependency>

Setup

The tests are found under the src/test/java/dev/ancaghenade/shipmentlistdemo/integrationtests folder. For the sake of simplicity and having smaller iterations, the tests are split into three categories:

using S3 and DynamoDB services - in the ShipmentServiceIntegrationTest class.

using S3, DynamoDB, and Lambda services - in the LambdaIntegrationTest class.

using all (S3, DynamoDB, Lambda, SNS, and SQS) services - in the MessageReceiverIntegrationTest class.

The resources are created programmatically and have been abstracted away in LocalStackSetupConfigurations. As a superclass, it is also marked with @SpringBootTest and @Testcontainers annotations.

@Testcontainers

@SpringBootTest(webEnvironment = WebEnvironment.DEFINED_PORT)

public class LocalStackSetupConfigurations {

The @SpringBootTest annotation loads the full Spring application context for the tests. WebEnvironment.DEFINED_PORT is used, which means the application is started with a real embedded web server listening on a defined port (in this case, port 8081). You also have the option of using a random port and injecting it (by using @LocalServerPort in combination with WebEnvironment.RANDOM_PORT), but since port conflicts are unlikely here, we'll keep it simple for running locally.

By using the @Testcontainers annotation, we enable a JUnit 5 extension that automatically manages the lifecycle of containers for us. In this specific case, the lifecycle of the container is linked to the lifecycle of the test class.

The Slf4jLogConsumer class from Testcontainers streams the output of a container to an existing SLF4J logger instance. By creating an Slf4jLogConsumer with the logger and passing it to the container's followOutput method, both standard output and standard error are redirected to the SLF4J logger.

Slf4jLogConsumer logConsumer = new Slf4jLogConsumer(LOGGER);

localStack.followOutput(logConsumer);

By default, these logs will be emitted at the INFO level, allowing you to conveniently capture and integrate container logs with your application's logging system. You can see the Lambda announcing the watermarking of the picture and sending its S3 key to the SNS topic:

2023-06-13T13:55:17.065+02:00 INFO 24958 --- [stream-33387850] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T11:55:17.056 DEBUG --- [ asgi_gw_1] l.s.a.i.version_manager : > Watermark has been added.

2023-06-13T13:55:17.065+02:00 INFO 24958 --- [stream-33387850] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T11:55:17.056 DEBUG --- [ asgi_gw_1] l.s.a.i.version_manager : > Published to topic: arn:aws:sns:us-east-1:000000000000:update_shipment_picture_topic

And a little lower, you will find that the Spring Boot application has received the message from the SQS queue it was listening on:

2023-06-13T14:36:12.144+02:00 INFO 25169 --- [ream-1992011100] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T12:36:12.117 INFO --- [ asgi_gw_2] localstack.request.aws : AWS sqs.SendMessage => 200

2023-06-13T14:36:12.144+02:00 INFO 25169 --- [ream-1992011100] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T12:36:12.118 DEBUG --- [ asgi_gw_1] l.services.sqs.models : de-queued message SqsMessage(id=9e4444cc-1ff4-4334-affd-b1c737d2bb19,group=None) from arn:aws:sqs:us-east-1:000000000000:update_shipment_picture_queue

2023-06-13T14:36:12.144+02:00 INFO 25169 --- [ream-1992011100] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T12:36:12.123 INFO --- [ asgi_gw_1] localstack.request.aws : AWS sqs.ReceiveMessage => 200

2023-06-13T14:36:12.305+02:00 INFO 25169 --- [ream-1992011100] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T12:36:12.303 DEBUG --- [ asgi_gw_3] l.s.a.i.version_manager : Got invocation result for invocation '918104f1-a094-4932-931b-d4194482e011'

2023-06-13T14:36:12.306+02:00 INFO 25169 --- [ream-1992011100] d.a.s.i.LocalStackSetupConfigurations : STDOUT: 2023-06-13T12:36:12.303 DEBUG --- [ asgi_gw_3] localstack.utils.threads : start_thread called without providing a custom name

2023-06-13T14:36:12.306+02:00 INFO 25169 --- [ntContainer#0-1] d.a.s.controller.MessageReceiver : Message from queue{"Type": "Notification", "MessageId": "c30dfa04-4aaa-4ed9-86b5-c469dbe62c43", "TopicArn": "arn:aws:sns:us-east-1:000000000000:update_shipment_picture_topic", "Message": "3317ac4f-1f9b-4bab-a974-4aa9876d5547/83abfc86-8ee9-408e-a2d6-cf01fb876119-cat.jpg", "Timestamp": "2023-06-13T12:36:12.010Z", "SignatureVersion": "1", "Signature": "EXAMPLEpH+..", "SigningCertURL": "https://sns.us-east-1.amazonaws.com/SimpleNotificationService-0000000000000000000000.pem", "UnsubscribeURL": "http://localhost:4566/?Action=Unsubscribe&SubscriptionArn=arn:aws:sns:us-east-1:000000000000:update_shipment_picture_topic:a1e8059d-6404-4a94-89c9-6fab958f2b64"}

In the superclass, the overrideConfigs method is annotated with @DynamicPropertySource. This annotation is used in integration tests with Spring Boot to override application configuration properties dynamically.

@BeforeAll()

protected static void setupConfig() {

localStackEndpoint = localStack.getEndpoint();

}

@DynamicPropertySource

static void overrideConfigs(DynamicPropertyRegistry registry) {

registry.add("aws.s3.endpoint",

() -> localStackEndpoint);

registry.add(

"aws.dynamodb.endpoint", () -> localStackEndpoint);

registry.add(

"aws.sqs.endpoint", () -> localStackEndpoint);

registry.add(

"aws.sns.endpoint", () -> localStackEndpoint);

registry.add("aws.credentials.secret-key", localStack::getSecretKey);

registry.add("aws.credentials.access-key", localStack::getAccessKey);

registry.add("aws.region", localStack::getRegion);

registry.add("shipment-picture-bucket", () -> BUCKET_NAME);

}

The properties are added to the DynamicPropertyRegistry, which allows you to define dynamic properties at runtime. We already know these properties from the application, including endpoints for S3, DynamoDB, SQS, SNS, AWS region, and the picture bucket name. The AWS-specific values are obtained from the LocalStack instance.
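
The registry takes suppliers rather than plain values because the container's endpoint is only known once the container is running. The plain-Java sketch below illustrates that idea only: LazyPropertyRegistry is a hypothetical stand-in written for this example, not Spring's DynamicPropertyRegistry implementation, and the endpoint value is made up.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Supplier;

// Hypothetical stand-in for illustration only; not Spring's DynamicPropertyRegistry.
class LazyPropertyRegistry {

    private final Map<String, Supplier<Object>> suppliers = new LinkedHashMap<>();

    // Registers a property whose value is computed only when it is read.
    void add(String name, Supplier<Object> valueSupplier) {
        suppliers.put(name, valueSupplier);
    }

    // Resolves the property by invoking its supplier at lookup time.
    Object get(String name) {
        Supplier<Object> supplier = suppliers.get(name);
        return supplier == null ? null : supplier.get();
    }
}

class LazyPropertyDemo {

    // Mirrors the static field in the test superclass; unset until the container "starts".
    static String localStackEndpoint;

    public static void main(String[] args) {
        LazyPropertyRegistry registry = new LazyPropertyRegistry();

        // Registration happens first, while the endpoint is still unknown.
        registry.add("aws.s3.endpoint", () -> localStackEndpoint);

        // Simulates the container start assigning a mapped port (made-up value).
        localStackEndpoint = "http://127.0.0.1:49153";

        // The supplier is evaluated now, so the late-assigned value is returned.
        System.out.println(registry.get("aws.s3.endpoint"));
    }
}
```

Had the property been registered as a plain value, it would have captured null at registration time. Deferring the lookup is what lets the same configuration code work with a port that changes on every run.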

Tests

First things first, running the LocalStack container.

@Container

protected static LocalStackContainer localStack =

new LocalStackContainer(DockerImageName.parse("localstack/localstack:2.1.0"))

.withEnv("DEBUG", "1");

A LocalStackContainer is created and managed through the @Container annotation, which ensures that the container is started before the tests execute and stopped after they complete. Because the field is static, the container is started once and shared by all test methods in the class, which is useful when you want to reuse the same container instance across different test scenarios. The container is configured with the withEnv method to set the DEBUG environment variable to 1, which enables more verbose logging within LocalStack.

Once the application starts and the container is up, the testing can begin by using the TestRestTemplate to make calls to the endpoints. TestRestTemplate allows you to interact with your RESTful API in integration tests by making HTTP requests and receiving responses. It is a test-friendly alternative to RestTemplate: 4xx and 5xx responses do not throw exceptions, so error statuses can be asserted directly on the response.

ResponseEntity<String> responseEntity = restTemplate.exchange(BASE_URL + url,

HttpMethod.POST, requestEntity, String.class);

ResponseEntity<byte[]> responseEntity = restTemplate.exchange(BASE_URL + url,

HttpMethod.GET, null, byte[].class);

Since the lifecycle of the container is tied to the test class, we'll use the @TestMethodOrder annotation to execute the tests in the order that we specify to make the most out of the sequential workflows.

We call all the endpoints and expect to get an HTTP 200 status code, but we also need to ensure that certain payloads will fail, so we need to check for edge cases and ensure all scenarios are covered.

assertEquals(HttpStatus.OK, responseEntity.getStatusCode());

assertEquals(HttpStatus.INTERNAL_SERVER_ERROR, responseEntity.getStatusCode());

For some cases, like uploading an object to an S3 bucket, the assertion scenarios are straightforward: if the upload succeeds, we get an OK status and check if retrieving the object gives us a non-null result. When it fails, it's because the entity for which we provided an ID does not exist in the database. We are expecting this behaviour, and so we test for it.

The Lambda function is responsible for adding a watermark to the uploaded picture. That means that the object that was processed is not the same as the one that went in. To verify this, we apply a hashing algorithm on the object before any manipulation and after, and we compare the two values by asserting that they're not equal.

var originalHash = applyHash(imageData);

//create request entity with imageData and make the POST call

ResponseEntity<byte[]> responseEntity = restTemplate.exchange(BASE_URL + getUrl,

HttpMethod.GET, null, byte[].class);

assertEquals(HttpStatus.OK, responseEntity.getStatusCode());

var watermarkHash = applyHash(responseEntity.getBody());

assertNotEquals(originalHash, watermarkHash);
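
The applyHash helper is not shown in the snippet above. One possible shape is sketched here, assuming a SHA-256 digest rendered as a hex string; the choice of algorithm and the class name ImageHashing are assumptions for illustration and may differ from what the repository uses.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

class ImageHashing {

    // Returns the SHA-256 digest of the given bytes as a lowercase hex string.
    static String applyHash(byte[] data) {
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            byte[] hash = digest.digest(data);
            StringBuilder hex = new StringBuilder(hash.length * 2);
            for (byte b : hash) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            // SHA-256 must be available on every Java platform, so this is unexpected.
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        // Placeholder byte arrays standing in for the picture before and after processing.
        byte[] original = "original image bytes".getBytes(StandardCharsets.UTF_8);
        byte[] processed = "watermarked image bytes".getBytes(StandardCharsets.UTF_8);

        // Different content yields different digests, which is what the test asserts.
        System.out.println(applyHash(original).equals(applyHash(processed)));
    }
}
```

Comparing digests rather than raw byte arrays keeps the assertion output short when it fails, and any change to the picture's bytes, such as an added watermark, produces a different digest.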

To verify the notification message passes through SNS and SQS to announce processing is finished, we call the server-sent events endpoint to make sure it arrived there. This is the endpoint the frontend application subscribes to, so it gets notified when to refresh with the new information. What we expect to see in the message is the entity ID that goes in with the picture.

var shipmentId = "3317ac4f-1f9b-4bab-a974-4aa9876d5547";

var sseUrl = "/push-endpoint";

ResponseEntity<String> sseEndpointResponse = restTemplate.getForEntity(BASE_URL + sseUrl,

String.class);

assertEquals(HttpStatus.OK, sseEndpointResponse.getStatusCode());

assertNotNull(sseEndpointResponse.getBody());

assertTrue(sseEndpointResponse.getBody().contains(shipmentId));
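
The contains check works because the S3 key published by the Lambda starts with the shipment ID, as the "Message" field in the log output earlier shows. A stricter assertion could extract the ID from the key first. The sketch below assumes the key layout shipmentId/fileName seen in that log line; ShipmentKeys and shipmentIdFromKey are hypothetical names that do not come from the repository.

```java
import java.util.Optional;
import java.util.UUID;

class ShipmentKeys {

    // Extracts the shipment ID from a key shaped like "<shipmentId>/<fileName>".
    // Returns an empty Optional when there is no prefix or it cannot be parsed as a UUID.
    static Optional<String> shipmentIdFromKey(String s3Key) {
        if (s3Key == null) {
            return Optional.empty();
        }
        int slash = s3Key.indexOf('/');
        if (slash <= 0) {
            return Optional.empty();
        }
        String candidate = s3Key.substring(0, slash);
        try {
            UUID.fromString(candidate);
            return Optional.of(candidate);
        } catch (IllegalArgumentException e) {
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        // Key copied from the "Message" field of the SNS notification in the log output.
        String key = "3317ac4f-1f9b-4bab-a974-4aa9876d5547/83abfc86-8ee9-408e-a2d6-cf01fb876119-cat.jpg";
        System.out.println(shipmentIdFromKey(key).orElse("no shipment ID found"));
    }
}
```

With a helper like this, the test could assert equality between the extracted ID and shipmentId instead of a substring match, which avoids false positives if the ID happened to appear elsewhere in the response body.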

These are just a few examples of the main workflows that should be kept in check; you can find other, more fine-grained cases in the repository.

Steps to run your tests

- Build the Lambda function:

Step into the shipment-picture-lambda-validator folder and run mvn clean package shade:shade

- Run the test classes:

From the root folder: mvn test -Dtest=ShipmentServiceIntegrationTest or use your favorite IDE for a nice green stack of tests.

Wrapping up

Integration testing is complicated enough on its own, and even more so when third-party services are involved. Cloud-native AWS applications that rely on managed services are especially tough to test.

Now that LocalStack is an official Testcontainers module, you can supercharge your integration tests and vastly increase the test coverage of your application, without any need for mocking or remote AWS sandbox accounts. The module simplifies container configuration, lifecycle management, and clean-up by leveraging the Testcontainers core library and module-specific features. Additionally, the @DynamicPropertySource methods enable dynamic configuration of the application to use the containerized dependencies. Overall, the combination of LocalStack and Testcontainers offers great advantages, such as isolation, speed, cost, and flexibility, making it effortless to start writing integration tests for your cloud application.

Further reading

Testcontainers LocalStack module - https://java.testcontainers.org/modules/localstack/

LocalStack user guides - https://docs.localstack.cloud/user-guide/

Testcontainers workshop - https://github.com/testcontainers/workshop